large-Scale Tv Dataset

Multimodal Named Entity Recognition

(STVD-MNER)

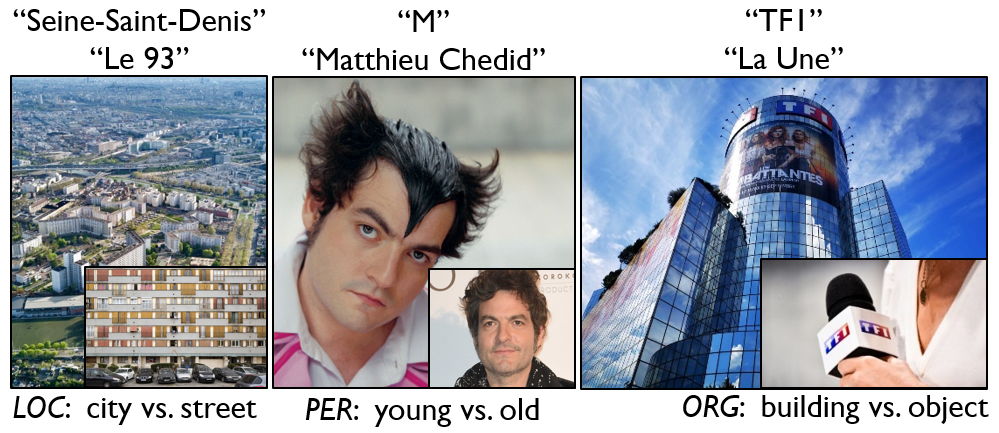

The STVD-MNER dataset is designed for the Multimodal Named Entity Recognition (MNER) task. MNER is a well-established research problem in Computer Vision (CV), Audio Signal Processing (ASP), and Natural Language Processing (NLP). It leverages visual, audio, and textual information to detect Multimodal Named Entities (MNEs) and determine their corresponding types (e.g., location, person, organization). The following figure illustrates examples of MNEs with various representations and entity types. As part of the STVD collection, the dataset STVD-MNER originates from television content, including information from Electronic Programming Guides (EPG) and TV audio-visual (A/V) streams.

The STVD-MNER dataset is currently released in a β version, which includes a Hello World test set. This test set contains approximately 820 hours of audio-visual content linked to around 9 thousand textual Named Entities (NEs). These NEs have been extracted from web sources.

In this setting, the MNER task must be performed from scratch

relying on the provided lists of NEs, the transcripts extracted from audio streams, and the associated audio-visual data.

A Full test set is planned for a future release and is expected to cover several thousand hours of audio-visual material.

The dataset is structured as follows:

- a root directory for each TV channel,

- within each TV channel directory, multiple collection subdirectories are provided,

- each collection subdirectory contains a set of JSON files describing the lists of NEs and their corresponding types,

- each collection subdirectory includes daily subdirectories that store files related to broadcast events, such as EPG data, audio, transcripts, and video. The EPG information is distributed as CSV files summarizing the original XMLTV data. Audio and video streams are encoded using the MPEG-4 format. The Transcripts are available in both SRT and TXT formats, provided with and without timestamps.

The dataset follows the naming convention described below.

CX |

/ |

NameCode |

/ |

NEs_list_imdb.json |

||

NEs_list_stvdkgstr.json |

||||||

NEs_list_stvdkgall.json |

||||||

day |

/ |

ts_epg.csv |

||||

ts_video.mp4 |

||||||

ts_audio.mp4 |

||||||

ts_transcript_wt.srt |

||||||

ts_transcript_wt.txt |

||||||

ts_transcript_wb.srt |

||||||

ts_transcript_wb.txt |

||||||

ts_transcript_ws.srt |

||||||

ts_transcript_ws.txt |

||||||

ts_transcript_wm.srt |

||||||

ts_transcript_wm.txt |

||||||

ts_transcript_wl.srt |

||||||

ts_transcript_wl.txt |

-

CXdenotes the directory name corresponding to a TV channel, whereCXtakes one of the following labels:{France2, France3, France5, C8, ARTE, W9, TF1}. -

NameCoderefers to the name of a subdirectory containing a collection. It is represented using a normalized ASCII format composed of lowercase charactersa-z, digits0-9, and the underscore symbol_(e.g.le_grand_betisier). TheNameCodeis standardized to a maximum length of80characters. -

NEs_list_Xdenotes the labels used to name the files containing lists of NEs, whereX ∈ {imdb, stvdkgall, stvdkgstr}. IMDb and STVD-KG correspond to the two web sources used for extracting the NEs.{stvdkgall, stvdkgstr}result from the application of two parameter settings,ΔKGandΔA/V, as described below. For the sake of consistency, the three files are provided for every collection, even when none data appears in the web sources. In such cases, the corresponding files are delivered with empty content. -

dayandtsare two timestamp formats used to name the subdirectories and files associated with broadcast events.dayfollows the template |YEAR|MONTH|DAY| (e.g., 20250529).tsfollows the template |YEAR|MONTH|DAY|_|HOURS|_|MINUTES| (e.g., 20250529_09_55), corresponding to the date and starting time of a broadcast event. -

The subdirectories containing broadcast events are labeled

day. -

The EPG files are labeled

ts_epg. -

The audio and video files are labeled

ts_audioandts_video, respectivly. -

The transcripts are generated by the

Speech-To-Text (STT)

Whisper

API using five transcription models:

{tiny, base, small, medium, large}. The files are labeledts_transcript_X, whereX ∈ {wt, wb, ws, wm, wl}for each of the models. Each transcription is provided both with and without subtitles, in SRT and TXT formats, resulting in 10 transcript files per audio file.

For understanding and testing purposes, a selection of samples (NEs and types with their associated audio, textual and video segments) is provided in the following table.

| NE | Type | Audio | Text | Video | |

| sample 1 | Gibbs | PER | 1_a | 1_t | 1_v |

| sample 2 | Saint-Denis | LOC | 2_a | 2_t | 2_v |

The dataset is available for non-commercial research purposes only.

To access the dataset, users must first download the agreement

(available in English or French ),

complete and sign it, and then send a scanned copy by email

to Mathieu Delalandre ![]() .

After reviewing and validating the request, we will provide the password required to extract the dataset.

.

After reviewing and validating the request, we will provide the password required to extract the dataset.

The dataset can be downloaded from the table below, which also presents general statistics. For easier distribution and access, the dataset is split into several archive packages of 16 GB each. The storage service at UT typically offers download speeds between 3 MB/s and 16 MB/s, depending on connection speed and concurrent access. As a result, downloading each package usually takes between 30 and 45 minutes. All packages must be downloaded to extract the complete dataset.

| Duration (h) | Channels | Collections | NEs files | NEs | EPG files | A/V files | Transcripts1 | Size (GB) | Packages | Link |

| 819 h | 7 | 284 | 284 x3 | 9,256 | 843 x1 | 876 x2 | 843 x10 | 280 | 18 | download |

110 additional inconsistent files appear. They are listed here and will be removed in a future release.

For clarity, we detail here technical and scientific aspects of the STVD-MNER dataset. Further information can be found in the research papers [1,2].

-

NEs and types:

were extracted using a robust approach [1] based on Named Entity Recognition (NER)

and Named Entity Linking (NEL) to build-up the

STVD-KG

Knowledge Graph from EPG data (eg. xmltvfr.fr).

The STVD-KG Knowledge Graph is linked to the Web database IMDb.

In both STVD-KG and IMDb, NEs are categorized into three types:

{PER, LOC, ORG}, corresponding respectively to person, location, and organization. The time intervals of the collections differ between the KG and the A/V data, such thatΔKG ≫ ΔA/V. Queries within the KG were therefore performed using these two intervals to obtain both overall and strict extractions of NEs. The following table presents statistics on the extracted NEs according to the two sources (STVD-KG and IMDb) and the two extraction methods (overall and strict). This process produces three lists of NEs:{imdb, stvdkgall, stvdkgstr}, each distributed across the three categories{PER, LOC, ORG}. For clarity, we also report the unionUnion = imdb ∪ stvdkgall(withstvdkgstr ∈ stvdkgall). Note that somePERentities may appear in duplicate across the two sources{imdb, stvdkgall}, why the result is described as an union.

NEs appear at different levels within the A/V collections and follow a near-exponential distribution. A subset of the dataset (PER LOC ORG Total imdb2,929 0 0 2,929 stvdkgstr326 206 7 539 stvdkgall3,732 2,496 99 6,327 Union≤ 6,661 2,496 99 ≤ 9,256 ≈⅓) accounts for the majority of NEs (≈85%). The remaining collections contain few or even no named entities. For clarity, the fileallprovides a detailed analysis of the distribution, which is summarized in the following table.Collections Duration NEs Min Mean Max 284 819.1h 9,256 0 32.6 893 -

A/V data: were captured following the methodology described in [2] with adaptations.

The audio streams were encoded at

256 kbpsas supported by the channels of the French DTT. The video streams were encoded at1.6 Mbpsin SD resolution (720×576, 30 FPS) to achieve a balanced trade-off between visual quality and storage cost. As in [2], specific parameters were applied to map the captured A/V streams to collections. In this process, the start and end times of a broadcast event (t0and endt1) were adjusted to extended boundaries (t0-,t1+). Since the A/V capture on the TV Workstation [2] is asynchronous, each capture session was limited to5×4hsegments per day in order to minimize latency between the audio and video streams. This latency is defined asL=dA-dV, wheredAanddVdenote the audio and video durations, respectively. The video duration (dV), obtained via hardware encoding, corresponds to the real-time. Consequently, the latency is negative, withL ∈ ]-19,-13.9[seconds (seelatency). It can be modeled as a linear functionL(t)withL(t=4h)=Lmin=-19seconds. The distribution ofL(t)is approximated as uniform, with an average value=Lmin/2=-9.5seconds and an error margin∓ ε =|Lmin|/2=9.5seconds. Consideringt0andt1as the timestamps of an audio segment, the corresponding mapping to the video segment is defined byt0+|Lmin|/2-ε=t0andt1+|Lmin|/2+ε=t1+|Lmin|. -

STT: the transcript files have been generated using

the Whisper STT suite

with 3 models

{tiny, base, small}. They are provided as SRT and TXT files with and without the timestamps. The STT requires a huge computation time. To process then=843audio files of the dataset (having a total duration of819h) a parallel processing have been deployed. This uses a high performance computer (DELL 5820 computer, CPU Intel Xeon W-2295,kmax=36threads, 256 GB of RAM, 36 TB of disk capacity) and a multithreading implementation (a threading parameterkis fixed for each model{tiny, base, small}, where each thread[1, k]processes in batch≈ n/kfiles). The optimumkparameters have been fixed for every model{tiny, base, small}from the interpolation of Throughput (Th) curves (x=k, y=Th) preventing the CPU/memory trashing. The overallThis derived from the multithreading processing and computed as withDithe duration of the audio data to process by the threadiandRTiits response (or execution) time. For time synchronization, it is important to have an equal duration of audio data to process per thread such asDi ≈ 819h/k. This is known as the partitioning problem intokequal sum subsets that is NP-hard with a complexityO(kn)(e.g. withk=36andn=850we haveO(kn)>10103solutions). It can be solved with different algorithms like the Greedy or Backtracking resulting in aRTvariation of≈5℅between thekthreads. Based on these different mechanisms, the obtained optimumThfor the models{tiny, base, small}are given here with, as well, the overall times to process the dataset.

tiny |

base |

small |

|

Th |

42.5 | 30.9 | 11.9 |

Dmax |

19.3h | 26.5h | 68.8h |

- H.G. Vu, N. Friburger, A. Soulet and M. Delalandre. stvd-kg: A Knowledge Graph for French Electronical Program Guides. International Conference on Web Information Systems Engineering (WISE), 2025.

- F. Rayar, M. Delalandre and V.H. Le. A large-scale TV video and metadata database for French political content analysis and fact-checking. Conference on Content-Based Multimedia Indexing (CBMI), pp. 181-185, 2022.